Building A Future-Proof, GxP-Compliant IT Infrastructure

By T.G. Prasanna, category specialist – IT & Telecom, Beroe, Inc.

In the pharmaceutical and clinical development sector, IT infrastructure is a regulated asset: Every server that processes clinical trial data, every storage system that archives electronic records, and every network component that transmits audit trails is subject to regulatory scrutiny.

Under FDA 21 CFR Part 11, computerized systems must maintain validated, tamper-evident records with controlled access and immutable audit trails.1 The EU counterpart, GMP Annex 11, imposes equivalent obligations on computerized systems supporting GxP activities.2 Both frameworks rest on a shared principle: The integrity, availability, and traceability of electronic records must be demonstrable at any moment, including during an unannounced regulatory inspection.

The ISPE GAMP 5 Second Edition (2022) extends this further by categorizing infrastructure components as Category 1, the lowest-risk but most foundational layer on which higher-risk clinical systems depend. A failure at the infrastructure level cascades upward: A misconfigured storage system invalidates the audit trail; a non-redundant server environment creates a single point of failure; and an undocumented network change breaks the chain of custody for clinical data.3

For emerging biotechs, infrastructure procurement must begin with a compliance lens, not a features list. The cost of remediating a compliance gap after an inspection finding consistently exceeds the cost of building the infrastructure correctly at the outset.

The Three Infrastructures For GxP-Compliant Operation

A. Data Center Infrastructure: Build, Co-locate, Or Cloud?

For most emerging biotechs, the question is not whether to invest in data center infrastructure but which deployment model is most appropriate given their compliance obligations, budget constraints, and growth trajectory. Three models dominate the landscape:

- On-premises environments give organizations full control over physical access, change control, and validation documentation but carry high capital expenditure and operational overhead that most early-stage companies cannot sustain.

- Co-location facilities provide dedicated hardware in a third-party data center, balancing cost efficiency with control, but require a robust supplier qualification agreement (SQA) confirming that the provider meets GxP environmental and security standards.

- Cloud-native platforms (AWS, Azure, GCP) offer elastic scalability and pre-certified compliance frameworks such as ISO 27001 and SOC 2 Type II, but GxP validation responsibility remains with the sponsor organization, not the cloud provider. The FDA’s 2024 guidance on electronic systems in clinical investigations confirms that computerized system validation obligations cannot be delegated to a cloud service provider.1

Procurement teams, therefore, should assess vendor compliance documentation rigorously before finalizing any data center partnership, including audit reports, uptime SLAs, data residency certifications, and disaster recovery procedures. A co-developed qualification plan between IT and quality assurance is essential at this stage.

B. Storage Virtualization: Compliance Without Complexity

Storage virtualization abstracts physical storage resources into a unified, manageable layer, enabling organizations to scale capacity, implement tiered storage policies, and maintain consistent backup and recovery protocols without continuous hardware investment. In a GxP context, storage virtualization systems must meet three nonnegotiable requirements:

- Immutability: Raw clinical data must be written to append-only or write-once storage layers that prevent unauthorized modification, directly satisfying the ALCOA+ principles of originality and accuracy.2,3

- Auditability: Every read, write, and administrative action on the storage layer must generate a timestamped, user-attributable log that can be presented to inspectors on demand.

- Disaster recovery: Storage systems must support tested, documented recovery time objectives (RTOs) and recovery point objectives (RPOs) aligned with the criticality of the GxP data they hold.
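
One way to picture how the immutability and auditability requirements can work together is a hash-chained, append-only log, in which each entry records who did what and when and also carries the hash of the entry before it, so that any later edit breaks the chain. The sketch below is illustrative only and is not a validated system; the `AuditLog` class, its field names, and the example file path are hypothetical, and a production GxP platform would rely on the storage vendor's own qualified audit trail.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _digest(record: dict) -> str:
    """Return the SHA-256 hex digest of a record serialized with sorted keys."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log (hypothetical sketch, not a validated system)."""
    entries: list = field(default_factory=list)

    def append(self, user: str, action: str, target: str) -> dict:
        # Each entry links to the previous entry's hash; the first links to zeros.
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "target": target,
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # hash is computed before the key is added
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and link; False means the chain was altered."""
        prev_hash = "0" * 64
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


log = AuditLog()
log.append("alice", "write", "raw/visit_001.csv")
log.append("bob", "read", "raw/visit_001.csv")
print(log.verify())
```

Editing any stored field after the fact, such as changing the user on the first entry, causes `verify()` to return `False`, which is the tamper-evident behavior an inspector would expect the storage layer to demonstrate.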

Procurement teams must require formal validation support packages from storage vendors, including installation qualification (IQ), operational qualification (OQ), and performance qualification (PQ) documentation. Vendors unable to supply this evidence introduce unacceptable compliance risk regardless of their commercial pricing advantage.

C. Compute Infrastructure: Right-Sizing For Regulatory Workloads

Server selection for GxP workloads involves considerably more than processing power and memory specifications. For emerging biotechs running CTMS, EDC, ePRO, LIMS, and bioinformatics pipelines concurrently, server environments must support:

- Change control integration: Any modification to server configurations, whether a firmware update, a capacity change, or an operating system (OS) patch, must be documented, reviewed, and approved through a formal change control process before implementation in a production GxP environment.1,3

- High-availability architecture: Clustering and failover configurations minimize unplanned downtime that could compromise data integrity or clinical operations continuity.

- Environment separation: Dedicated development, validation (testing), and production environments must be maintained to prevent uncontrolled changes from reaching live GxP systems.2
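
The change control and environment separation requirements above can be thought of as a gate that a deployment tool checks before touching production. The following is a minimal sketch under stated assumptions: the `ChangeRequest` structure, the status names, and the two-approver threshold are all hypothetical, and an organization's actual rules would come from its own change control SOP, which may also restrict changes to the validation environment.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    REVIEWED = "reviewed"
    APPROVED = "approved"


@dataclass
class ChangeRequest:
    change_id: str
    description: str
    environment: str  # "development", "validation", or "production"
    status: Status = Status.DRAFT
    approvers: list = field(default_factory=list)


def may_deploy(change: ChangeRequest, required_approvers: int = 2) -> bool:
    """Allow production changes only when approved by enough distinct people.

    Assumption for this sketch: non-production environments are not gated.
    """
    if change.environment != "production":
        return True
    enough_approvers = len(set(change.approvers)) >= required_approvers
    return change.status is Status.APPROVED and enough_approvers


patch = ChangeRequest("CR-001", "OS security patch", "production")
print(may_deploy(patch))  # still a draft with no approvers

patch.status = Status.APPROVED
patch.approvers = ["qa_lead", "it_owner"]
print(may_deploy(patch))
```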

The FDA’s Computer Software Assurance (CSA) final guidance, issued in September 2025, reinforces that validation effort must be proportional to the risk level of the system, but that does not reduce the obligation to validate infrastructure supporting GxP activities.4

Hidden Cost Drivers In GxP IT Procurement

One of the most persistent challenges for emerging biotechs is the gap between quoted technology costs and true total cost of ownership (TCO). In GxP environments, several cost dimensions are consistently underestimated at the procurement stage:

- Validation and qualification costs, which cover IQ/OQ/PQ documentation, ongoing periodic system reviews, and change control management, are routinely excluded from vendor quotes. While the amount varies by system complexity and organizational maturity, practitioners across life sciences consistently identify validation overhead as a material and underestimated cost driver when deploying GxP IT systems.

- Ongoing compliance maintenance, including software update validation, audit trail reviews, and access control audits, represents a recurring operational expense that must be budgeted annually, not treated as a one-time deployment overhead.

- Vendor audit and oversight requirements under GxP mean that any infrastructure supplier who touches or stores clinical data must be periodically qualified. The cost of conducting and documenting supplier audits is frequently invisible in early-stage IT budgets.

- Data growth scaling costs in cloud environments can escalate sharply as clinical trial data volumes expand. A study published in Nature Reviews Genetics projected life sciences data generation reaching multi-exabyte scales annually by the mid-2020s, driven by sequencing, imaging, and real-time analytics.5 Biotechs that fail to model data growth trajectories at procurement often face unexpected cost escalations during active clinical programs.
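
To make the gap between a quoted price and TCO concrete, the cost dimensions listed above can be combined in a simple model. This is a rough sketch, not a costing methodology: the function names, the compound-growth assumption for storage, and every figure in the example are hypothetical placeholders that a procurement team would replace with its own estimates.

```python
def storage_cost_by_year(initial_tb, annual_growth, price_per_tb_year, years):
    """Yearly storage cost, assuming data volume compounds at a fixed annual rate."""
    return [
        initial_tb * (1 + annual_growth) ** year * price_per_tb_year
        for year in range(years)
    ]


def total_cost_of_ownership(
    quoted_cost,
    validation_cost,
    annual_compliance_cost,
    annual_supplier_audit_cost,
    storage_costs,
):
    """Quoted price plus one-time validation, recurring compliance, and storage."""
    years = len(storage_costs)
    recurring = years * (annual_compliance_cost + annual_supplier_audit_cost)
    return quoted_cost + validation_cost + recurring + sum(storage_costs)


# Hypothetical three-year example; all numbers are placeholders.
storage = storage_cost_by_year(
    initial_tb=100, annual_growth=0.5, price_per_tb_year=10, years=3
)
tco = total_cost_of_ownership(
    quoted_cost=50_000,
    validation_cost=20_000,
    annual_compliance_cost=5_000,
    annual_supplier_audit_cost=2_000,
    storage_costs=storage,
)
print(f"Quoted: 50000, modeled TCO: {tco:.0f}")
```

Even in this toy example, the items a vendor quote typically omits account for a large share of the modeled total, and the storage line keeps rising each year as data volumes grow.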

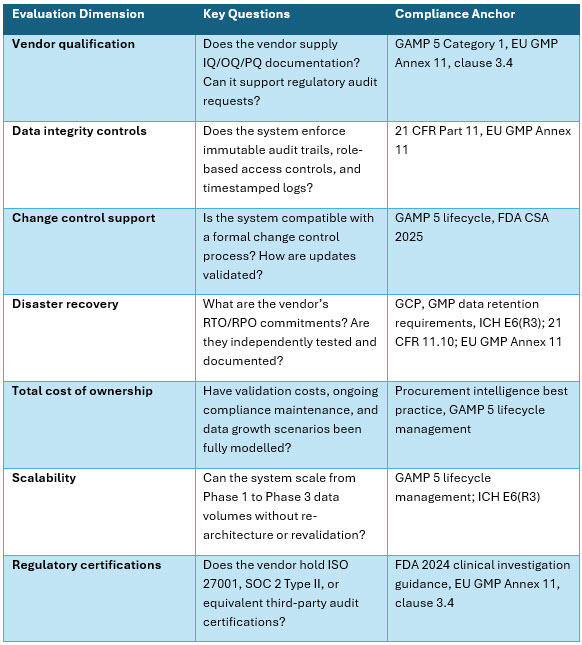

A Practical Procurement Evaluation Framework

The following framework provides structured evaluation dimensions that procurement and IT leaders at emerging biotechs can apply when assessing foundational GxP IT infrastructure candidates.

Governance By Design: Embedding Compliance Into Procurement

The most durable GxP-compliant infrastructures are not those remediated after an inspection finding but those in which compliance requirements were embedded into vendor selection criteria, contract language, and qualification agreements from the outset. Several principles should guide this governance-by-design approach:

- Establish a validation master plan (VMP) before procurement. The VMP defines the validation strategy, scope, responsibilities, and acceptance criteria across the entire infrastructure portfolio. It provides a compliance baseline against which every new vendor and system can be consistently assessed.

- Require compliance documentation as a procurement gate. Any vendor unable to supply validation support documentation, security certifications, and audit trail architecture evidence should not advance past the initial evaluation stage, regardless of commercial attractiveness.

- Model total compliance cost alongside technology cost. Procurement decisions optimized on unit price while ignoring validation, audit, and maintenance overhead frequently generate higher total costs and greater regulatory risk over a three- to five-year program horizon.

- Build scalability into the compliance architecture from day one. As biotechs progress from discovery through Phase 1, 2, and 3 trials, data volumes, user populations, and regulatory scrutiny all increase substantially. Infrastructure selected for Phase 1 must be architected and validated to scale, not replaced under time pressure during active clinical phases.
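
The procurement gate described above can be expressed as a simple checklist test: a vendor advances only when every required evidence category has been supplied. The sketch below assumes three evidence categories taken from the principle on compliance documentation; the identifiers and the example vendor submissions are hypothetical, and a real gate would also assess the quality of each document, not just its presence.

```python
# Evidence categories a vendor must supply to pass the initial gate (assumed labels).
REQUIRED_EVIDENCE = {
    "validation_support_documentation",
    "security_certifications",
    "audit_trail_architecture",
}


def missing_evidence(supplied):
    """Return the required evidence categories the vendor has not supplied."""
    return sorted(REQUIRED_EVIDENCE - set(supplied))


def passes_gate(supplied):
    """A vendor advances only if nothing required is missing."""
    return not missing_evidence(supplied)


# Hypothetical vendor submissions.
vendor_a = [
    "validation_support_documentation",
    "security_certifications",
    "audit_trail_architecture",
]
vendor_b = ["security_certifications", "price_list"]

print(passes_gate(vendor_a))
print(missing_evidence(vendor_b))
```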

The FDA’s 2024 guidance on data integrity enforcement reinforces this urgency. CDER warning letters rose 50% in FY2025, with data integrity deficiencies, including uncontrolled deletion of electronic data, failure to review audit trails, and inadequate backup procedures, among the most frequently cited findings.1,4 For emerging biotechs, these are operational consequences of procurement decisions made months or years earlier.

Conclusion

For many biotechs, building a GxP-compliant IT infrastructure is less a technology challenge than a strategic procurement discipline. The choices made at the infrastructure layer directly determine whether clinical data can be trusted, protected, and defended before regulators at any point in a drug development program.

By applying a structured evaluation framework, modeling total compliance costs honestly, demanding vendor qualification evidence as a nonnegotiable procurement gate, and embedding governance requirements into infrastructure selection from day one, procurement and IT leaders can build a foundation that supports not just the current clinical program but the organization’s entire development trajectory, without overextending limited research budgets.

The pharmaceutical sector has learned, often through costly inspection findings and program delays, that compliance cannot be retrofitted. For the next generation of biotechs, the opportunity and the obligation is to build it right from the start.

References:

1. U.S. Food and Drug Administration. Electronic Systems, Electronic Records, and Electronic Signatures in Clinical Investigations: Questions and Answers. Guidance for Industry. October 2024. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/electronic-systems-electronic-records-and-electronic-signatures-clinical-investigations-questions

2. European Commission. EudraLex Volume 4, GMP Guidelines, Annex 11: Computerised Systems. European Union, 2011 (draft revision 2025). https://health.ec.europa.eu/medicinal-products/eudralex/eudralex-volume-4_en

3. International Society for Pharmaceutical Engineering (ISPE). GAMP 5: A Risk-Based Approach to Compliant GxP Computerized Systems, Second Edition. July 2022. https://ispe.org/publications/guidance-documents/gamp-5-guide-2nd-edition

4. U.S. Food and Drug Administration. Computer Software Assurance for Production and Quality System Software. Final Guidance. September 24, 2025. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/computer-software-assurance-production-and-quality-system-software

5. Langmead, B. & Nellore, A. Cloud computing for genomic data analysis and collaboration. Nature Reviews Genetics, 19, 208–219 (2018). https://www.nature.com/articles/nrg.2017.113

About The Author:

T.G. Prasanna is a category specialist in IT hardware at Beroe Inc., a globally recognized procurement intelligence firm serving Fortune 500 clients across industries including life sciences and pharmaceuticals. With nearly a decade at Beroe and more than 15 years of post-MBA experience spanning market research and strategy consulting, he specializes in data center infrastructure, cloud infrastructure, and storage technologies, delivering market intelligence, cost structure analysis, and vendor capability assessments that help procurement leaders make informed, defensible technology decisions. Prior to Beroe, he served as a research analyst at Accenture, supporting growth and strategy initiatives across the electronics and high technology, and aerospace and defense sectors.
