Safe And Sustainable DCTs and Hybrid Trials (Part 2)

By Kannan Ramachandran, category specialist - Cloud Computing & Data Center, Beroe, Inc.

Part 1 of this series demonstrated why cloud infrastructure has become foundational to hybrid and decentralized trials, enabling remote data capture, continuous device streaming, and multiregional oversight while reducing operational friction across distributed participants and vendors. But while cloud capabilities unlock scalability, they also expose new risks around latency, auditability, data drift, and fragmented oversight. Addressing these challenges requires not just strong infrastructure but also an intentional operating model that ensures reliability, compliance, and end‑to‑end traceability.

Part 2 shifts from the “why” to the “how.” In this section, we outline the practical architectural patterns, governance structures, and operating mechanisms that allow sponsors to run hybrid and decentralized trials with discipline and inspection‑ready rigor. We explore cloud‑native integration approaches that minimize reconciliation burden, governance‑by‑design practices that embed ALCOA+ principles into every workflow, workload placement strategies that optimize performance and compliance, and operating models that replace ad hoc oversight with predictable, data‑driven execution.

Together, these elements form an actionable blueprint for scaling decentralized and hybrid trial programs sustainably by balancing innovation with the reliability and regulatory confidence required for global deployment.

Pitfalls No One Owns Until Inspectors Ask

Even with strong cloud foundations, hybrid and decentralized trials face hidden operational risks that often surface only during audits or inspections. These risks typically arise not from the data sources themselves but from gaps in orchestration, monitoring, and oversight across distributed components.

A. Latency and Connectivity Gaps

DCTs rely heavily on participants in diverse geographies, including rural or low-bandwidth regions where devices may struggle to synchronize data reliably. This creates delays in ePRO/eCOA submissions, wearable streams, and large imaging uploads, affecting both data freshness and the ability of study teams to take timely action. Sponsors consistently identify these latency-driven delays as operational burdens, particularly as remote data volume accelerates.1

Practical platform guidance released in 2025 also reinforces the importance of SLOs, retry strategies, resumable uploads, and regional API endpoints to stabilize performance under variable network conditions.5 These safeguards ensure that remote data flows remain resilient even when participants are offline or intermittently connected.
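The retry and resumable upload safeguards described above can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference to any particular platform: `upload_with_resume`, `FlakyServer`, the chunk size, and the delay values are all hypothetical names and numbers chosen for the example.

```python
import random
import time
from typing import Callable


def upload_with_resume(
    data: bytes,
    send_chunk: Callable[[int, bytes], None],
    chunk_size: int = 4,
    max_attempts: int = 5,
    base_delay: float = 0.01,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Send data chunk by chunk, retrying only the failed chunk.

    Returns the total number of send attempts. All defaults are
    illustrative assumptions, not recommended production values.
    """
    offset = 0
    attempts_total = 0
    while offset < len(data):
        chunk = data[offset:offset + chunk_size]
        for attempt in range(max_attempts):
            attempts_total += 1
            try:
                send_chunk(offset, chunk)
                break
            except ConnectionError:
                if attempt == max_attempts - 1:
                    raise
                # Exponential backoff with full jitter before the next try.
                sleep(random.uniform(0, base_delay * 2 ** attempt))
        # Progress is kept per chunk, so a dropped connection never
        # forces the upload to restart from byte zero.
        offset += len(chunk)
    return attempts_total


class FlakyServer:
    """Hypothetical receiver that drops every other request."""

    def __init__(self) -> None:
        self.received = bytearray()
        self.calls = 0

    def send_chunk(self, offset: int, chunk: bytes) -> None:
        self.calls += 1
        if self.calls % 2 == 1:
            raise ConnectionError("simulated network drop")
        # Writing by offset makes a repeated chunk harmless.
        self.received[offset:offset + len(chunk)] = chunk
```

Writing by offset is the design choice that matters here: a chunk that is delivered twice lands in the same place, so retries cannot corrupt or duplicate the payload.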

B. Monitoring Blind Spots

As trials adopt continuous biomarker and sensor-based monitoring, the sheer volume of data can obscure critical issues such as missingness, drift, or device malfunctions unless the cloud architecture includes strong observability. Effective monitoring requires a combination of range checks, trend detection, data freshness metrics, and device heartbeat telemetry to uncover hidden gaps before they compromise protocol adherence. Real-world DCT case studies highlight that the most successful programs rely on always-on dashboards, automated exception routing, and proactive alerts that surface anomalies early.3
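As a rough sketch of how range checks and freshness checks might be combined for one device, consider the example below. The `Reading` type, the heart rate bounds, and the 30-minute silence window are hypothetical assumptions for illustration only; real thresholds would come from the protocol and device specifications.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Reading:
    device_id: str
    taken_at: datetime
    heart_rate: float


def check_device(
    readings: List[Reading],
    now: datetime,
    lo: float = 30.0,           # assumed plausible lower bound (bpm)
    hi: float = 220.0,          # assumed plausible upper bound (bpm)
    max_silence: timedelta = timedelta(minutes=30),  # assumed heartbeat window
) -> List[str]:
    """Return alert codes for a single device's readings."""
    if not readings:
        return ["no_data"]
    alerts: List[str] = []
    # Range check: values outside plausible bounds suggest malfunction.
    out_of_range = [r for r in readings if not lo <= r.heart_rate <= hi]
    if out_of_range:
        alerts.append(f"range:{len(out_of_range)}")
    # Freshness check: a long gap since the latest reading suggests
    # the device is offline or not syncing.
    latest = max(r.taken_at for r in readings)
    if now - latest > max_silence:
        alerts.append("stale")
    return alerts
```

In practice the returned codes would feed the automated exception routing mentioned above rather than being read by hand.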

At a broader level, academic evaluations emphasize that decentralized research still needs more mature methodological validation frameworks to keep pace with increasingly digital data flows, which adds urgency to adopting robust quality and monitoring practices.2

C. Fragmented Audit Trails And Vendor Drift

Because DCTs frequently involve multiple SaaS platforms, such as EDC, eCOA, eConsent, RTSM, imaging portals, and safety systems, audit trails are often fragmented across vendors. Without unified audit logging, traceability becomes inconsistent, making it difficult to demonstrate exactly who performed which action, when, where, and why across all systems involved.

Industry analyses from 2023–2024 show that auditors routinely flag gaps in TMF completeness, missing remote visit documentation, and insufficient audit trail robustness, especially in vendor-managed components.4 Regulatory workshops involving the FDA, MHRA, and Health Canada further underscore that decentralized elements still carry full GCP expectations, meaning sponsors, not vendors, are ultimately responsible for oversight and data integrity.6

Inspection reality: Sponsors must be prepared to reconstruct full cross-system traceability, not just within the EDC, because regulators increasingly scrutinize every integration hop.4,6

4. Integration Patterns That Reduce Reconciliation And Inspection Risk

Hybrid and decentralized trials rely on multiple interconnected systems such as EDC, eCOA, eConsent, RTSM, imaging, safety, and device data platforms. Without a coherent integration strategy, these systems drift out of sync, causing reconciliation challenges, data inconsistencies, and audit vulnerabilities. Cloud-native integration patterns offer a more reliable and inspectable way to orchestrate data across this distributed ecosystem, ensuring that operational signals remain consistent and regulators can trace every data transformation end to end.

4.1 API First Architecture With Idempotency

Modern DCT platforms increasingly adopt versioned, well-documented APIs to standardize how systems exchange data. Idempotent operations ensure that retries (caused by network interruptions or system delays) do not produce duplicate records, a critical safeguard when combining ePRO/eCOA entries, device data, or visit events across remote participants.5
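One common way to make a write operation idempotent is to have the client attach a unique key to each logical submission. The sketch below assumes that approach; `IngestStore` and its in-memory dictionary are hypothetical stand-ins for a real API and database.

```python
import hashlib
import json
from typing import Dict, Tuple


class IngestStore:
    """In-memory stand-in for an ingestion endpoint (illustrative only)."""

    def __init__(self) -> None:
        self._by_key: Dict[str, dict] = {}

    def submit(self, idempotency_key: str, payload: dict) -> Tuple[dict, bool]:
        """Store the payload once per key; return (record, created)."""
        fingerprint = hashlib.sha256(
            json.dumps(payload, sort_keys=True).encode("utf-8")
        ).hexdigest()
        existing = self._by_key.get(idempotency_key)
        if existing is not None:
            # A retry of the same submission returns the original record.
            if existing["fingerprint"] != fingerprint:
                raise ValueError("idempotency key reused with a different payload")
            return existing, False
        record = {
            "id": len(self._by_key) + 1,
            "fingerprint": fingerprint,
            "payload": payload,
        }
        self._by_key[idempotency_key] = record
        return record, True

    def count(self) -> int:
        return len(self._by_key)
```

A device that times out and resends the same ePRO entry with the same key gets the original record back, so the retry is safe to repeat any number of times.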

4.2 Event-Driven Orchestration Instead Of Point-To-Point Pipelines

Publish/subscribe designs decouple systems so that EDC, eCOA, RTSM, and logistics platforms respond to events (e.g., “Visit submitted,” “Drug dispensed,” “Device offline”) instead of relying on brittle, manual, or nightly file drops. This reduces integration failures and simplifies inspection because each event is logged, timestamped, and recoverable.5
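The publish/subscribe idea can be illustrated with a small in-memory bus. This is a sketch only: `EventBus`, the event type string, and the log structure are hypothetical, and a production system would typically rely on a managed message broker instead.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class EventBus:
    """Minimal in-process publish/subscribe bus with a replayable log."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self.log: List[dict] = []

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, data: dict) -> dict:
        # Every event is sequenced and timestamped before delivery,
        # so the log doubles as an inspection record.
        event = {
            "seq": len(self.log) + 1,
            "type": event_type,
            "at": datetime.now(timezone.utc).isoformat(),
            "data": data,
        }
        self.log.append(event)
        for handler in self._subscribers[event_type]:
            handler(event)
        return event

    def replay(self, event_type: str, handler: Handler) -> None:
        """Re-deliver logged events, e.g. to a consumer that was offline."""
        for event in self.log:
            if event["type"] == event_type:
                handler(event)
```

The publisher never needs to know which systems are listening, which is what removes the point-to-point coupling.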

4.3 Data Contracts To Prevent Schema Drift

Data contracts define required fields, units, enumerations, null rules, and validation logic for every upstream data producer, from wearable vendors to site-reported assessments. Versioning these contracts and testing them like code prevents schema drift, which is one of the most common causes of reconciliation inconsistencies in decentralized ecosystems.5
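A data contract can be expressed as plain, versioned data and checked on every incoming record. The example below is a simplified sketch; the field names, bounds, and the `CONTRACT_V1` structure are hypothetical and far smaller than a real contract would be.

```python
from typing import Any, Dict, List

# Hypothetical contract for a wearable heart rate feed.
CONTRACT_V1: Dict[str, Any] = {
    "version": "1.0.0",
    "fields": {
        "participant_id": {"type": str, "required": True},
        "heart_rate": {"type": (int, float), "required": True,
                       "unit": "bpm", "min": 20, "max": 250},
        "posture": {"type": str, "required": False,
                    "enum": {"sitting", "standing", "supine"}},
    },
}


def validate(record: Dict[str, Any], contract: Dict[str, Any]) -> List[str]:
    """Return a list of contract violations; an empty list means valid."""
    errors: List[str] = []
    fields = contract["fields"]
    # Unknown fields are the usual first symptom of schema drift.
    for name in record:
        if name not in fields:
            errors.append(f"unexpected field: {name}")
    for name, rule in fields.items():
        value = record.get(name)
        if value is None:
            if rule["required"]:
                errors.append(f"missing required field: {name}")
            continue
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, rule["type"]):
            errors.append(f"wrong type: {name}")
            continue
        if "enum" in rule and value not in rule["enum"]:
            errors.append(f"value not allowed: {name}")
        if "min" in rule and value < rule["min"]:
            errors.append(f"below minimum: {name}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"above maximum: {name}")
    return errors
```

Because the contract is ordinary data, it can live in version control and be exercised by the same automated tests as the integration code.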

4.4 Operational Data Store (ODS) As A Single Operational Truth

An ODS provides a curated near-real-time view of operational signals (visit status, ePRO compliance, device uptime, shipment events) without overloading source systems. This unified layer allows study managers, CRAs, and safety teams to make decisions based on synchronized data instead of reconciling multiple disconnected vendor feeds.5

4.5 Automated, Rules-Driven Reconciliations

Rather than relying on manual spreadsheets, automated rules compare expected vs. observed states across systems, e.g., an ePRO entry indicates a visit occurred, but the corresponding CRF is missing in EDC, or a device heartbeat indicates usage, but no data has been ingested. Exceptions routed into work queues with SLA timers create a defensible audit trail of issue resolution. Audit readiness reports consistently emphasize that automated reconciliation and documented exception handling materially reduce inspection findings.4
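The first rule described above (an ePRO entry exists but the CRF is missing in EDC) reduces to a set difference with an SLA timer attached. The sketch below assumes visits can be keyed by participant and visit name; `ReconException`, the rule name, and the 48-hour SLA are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Set, Tuple

VisitKey = Tuple[str, str]  # (participant_id, visit_name)


@dataclass
class ReconException:
    rule: str
    participant_id: str
    visit: str
    opened_at: datetime
    due_at: datetime


def reconcile_visits(
    epro_visits: Set[VisitKey],
    edc_visits: Set[VisitKey],
    now: datetime,
    sla: timedelta = timedelta(hours=48),  # assumed resolution window
) -> List[ReconException]:
    """Open one exception per visit seen in ePRO but absent from EDC."""
    missing = sorted(epro_visits - edc_visits)
    return [
        ReconException("epro_without_crf", pid, visit, now, now + sla)
        for pid, visit in missing
    ]
```

Each exception carries its own opening time and due time, which is what turns a spreadsheet comparison into a documented, time-bound work item.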

5. Governance By Design (ALCOA+ Without The Pain)

As hybrid and decentralized trials scale, governance can no longer rely on manual documentation or post hoc cleanup. Instead, compliance must be embedded directly into the architecture to ensure that every data set, transformation, and system interaction inherently satisfies regulatory expectations. This “governance by design” approach aligns with evidence from 2024–2026 analyses, all of which emphasize the need for operationally mature, cloud-native oversight mechanisms1,2 and reinforce the importance of robust auditability across decentralized ecosystems.4,6

ALCOA+ Through System Design

Cloud-based DCT pipelines make it possible to enforce attributable, legible, contemporaneous, original, accurate (ALCOA) data plus the “+” attributes: complete, consistent, enduring, and available. Immutable raw data layers and strong role-based access controls (RBAC) ensure that data capture is trustworthy from the moment it enters the system.

Lineage And Time Travel For Reconstruction

Every transformation, validation, or enrichment step should automatically store lineage metadata, enabling teams to reconstruct “as was” data sets for any given point in time. This is crucial for demonstrating traceability during inspections, especially for decentralized workflows.2
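One way to support "as was" reconstruction is to never overwrite a value and instead append a new version that records which step produced it. The following is a minimal sketch under that assumption; `Version`, `VersionedStore`, and the field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class Version:
    record_id: str
    value: Any
    recorded_at: datetime
    step: str                 # transformation that produced this version
    source: Optional[str]     # upstream system or file, if known


class VersionedStore:
    """Append-only history with point-in-time reads."""

    def __init__(self) -> None:
        self._history: List[Version] = []

    def append(self, version: Version) -> None:
        self._history.append(version)

    def as_of(self, when: datetime) -> Dict[str, Version]:
        """Return the latest version of each record at or before `when`."""
        snapshot: Dict[str, Version] = {}
        for v in sorted(self._history, key=lambda v: v.recorded_at):
            if v.recorded_at <= when:
                snapshot[v.record_id] = v
        return snapshot

    def lineage(self, record_id: str) -> List[Version]:
        """Return every version of one record in chronological order."""
        return sorted(
            (v for v in self._history if v.record_id == record_id),
            key=lambda v: v.recorded_at,
        )
```

Because nothing is deleted, the same store answers both questions an inspector tends to ask: what the data set looked like on a given date, and how a given value came to be.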

Central Audit Bus for End-to-End Traceability

Instead of fragmented logs scattered across vendors, a centralized audit bus aggregates who, what, when, and where metadata from all integrated systems. This ensures sponsors, not vendors, retain a complete inspection-ready view of system activity.4,6
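A centralized audit log can normalize entries from every system into one shape. The sketch below also chains entries by hash so that later edits are detectable; that chaining is an added assumption for illustration, not something the cited sources prescribe, and `AuditLog` and its field names are hypothetical.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()


class AuditLog:
    """Append-only audit log with a simple hash chain."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def record(self, system: str, actor: str, action: str,
               target: str, at: str, reason: str = "") -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "system": system,   # where
            "actor": actor,     # who
            "action": action,   # what
            "target": target,
            "at": at,           # when (ISO 8601 string)
            "reason": reason,   # why
            "prev": prev,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was altered or removed."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

The normalized fields map directly onto the who, what, when, and where questions, regardless of which vendor system emitted the original log line.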

Validation And Change Control Embedded In The Workflow

Cloud-native validation pipelines, Dev/Test/Prod environment separation, automated release note capture, and scheduled mock inspections provide continuous assurance that systems remain compliant as integrations evolve. This matches the operational gaps highlighted in decentralized audit summaries.4

Privacy, Residency, and Consent Aligned Storage

Tokenization or pseudonymization techniques, combined with region-specific data stores, ensure that data residency obligations and participant consent boundaries are respected. These practices are emphasized across regulatory discussions on decentralized elements and digital oversight.6
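Keyed hashing is one common pseudonymization technique, and it pairs naturally with routing by region. The example below is a sketch under stated assumptions: the store names in `REGION_STORES`, the record layout, and the function names are hypothetical, and key management is out of scope.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical mapping of participant region to a regional data store.
REGION_STORES: Dict[str, str] = {"EU": "store-eu", "US": "store-us"}


def pseudonymize(participant_id: str, secret_key: bytes) -> str:
    """Derive a stable token with HMAC-SHA256.

    The same input and key always yield the same token, so records can
    still be linked, but the token cannot be reversed without the key.
    """
    return hmac.new(
        secret_key, participant_id.encode("utf-8"), hashlib.sha256
    ).hexdigest()


def route_record(record: Dict[str, Any], secret_key: bytes) -> Dict[str, Any]:
    """Replace the direct identifier and pick the regional store."""
    region = record["region"]
    if region not in REGION_STORES:
        # Fail closed: never fall back to a default region.
        raise ValueError(f"no store configured for region {region!r}")
    stored = {k: v for k, v in record.items() if k != "participant_id"}
    stored["token"] = pseudonymize(record["participant_id"], secret_key)
    return {"store": REGION_STORES[region], "record": stored}
```

Failing closed on an unknown region is deliberate: silently defaulting to one region is exactly how residency obligations get breached.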

6. Workload Placement: Edge Vs. On Premises Vs. Cloud

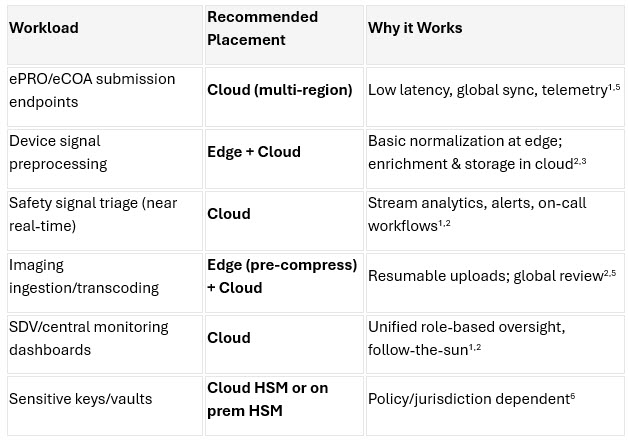

As hybrid and decentralized trials mature, sponsors must determine where each workload should run: at the edge, in the cloud, or on premises. No single location fits all use cases. Instead, performance, latency, compliance, and data flow requirements should drive placement decisions. Evidence from 2024–2026 shows that strategic workload placement significantly improves data reliability, reduces operational friction, and strengthens inspection readiness.1,2,6

To help sponsors optimize their technical architecture, the table below outlines common DCT workloads and the recommended execution environment for each, along with the rationale.

7. Operating Model For Hybrid/DCT Success Without Spreadsheets

Even with strong cloud architecture, DCTs cannot operate effectively without a modern, coordinated operating model. Traditional spreadsheet-driven oversight breaks down under the volume, velocity, and variability of DCT data. Sponsors that excel in decentralized execution apply structured operational practices (shared ownership across functions, clear SLOs, real-time observability, and routine inspection simulation) to ensure data integrity and trial continuity. Evidence from operational commentaries, platform guidance, and audit/inspection analyses consistently reinforces the need for these disciplined practices in cloud-enabled DCT environments.1,4,5,6

7.1 TrialOps Pod: Cross-Functional Coordination

A centralized “TrialOps” pod brings together clinical operations, data management, safety, biostatistics, and IT/integration teams under shared KPIs. This cross-functional group monitors real-time indicators, such as ePRO data freshness (≥99% within 15 minutes), device heartbeat stability, ingestion throughput, and reconciliation SLAs. By aligning teams around the same operational truth, DCTs run more predictably and with fewer quality surprises.1,5
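The freshness indicator mentioned above (at least 99% of ePRO entries available within 15 minutes) can be computed from capture and ingestion timestamps. This is a minimal sketch; the function names and the pair-of-timestamps input shape are assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

# Each event is (captured_at, ingested_at).
Event = Tuple[datetime, datetime]


def freshness_ratio(
    events: List[Event],
    threshold: timedelta = timedelta(minutes=15),
) -> float:
    """Share of events ingested within `threshold` of capture."""
    if not events:
        return 1.0  # assumption: no traffic counts as meeting the objective
    on_time = sum(
        1 for captured, ingested in events
        if ingested - captured <= threshold
    )
    return on_time / len(events)


def meets_slo(events: List[Event], target: float = 0.99) -> bool:
    """True when the freshness ratio reaches the target."""
    return freshness_ratio(events) >= target
```

Measuring from capture time rather than submission time matters for offline participants, since a diary entry completed without connectivity only starts to look stale once it fails to arrive.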

7.2 Runbooks And SLOs For Every Critical Workflow

DCTs demand clear runbooks and service level objectives across ingestion, device monitoring, reconciliation cycles, and audit log completeness. These documents formalize roles, escalation paths, and expected performance thresholds, helping teams manage complexity and preventing ad hoc decision-making.5

7.3 Observability As A Core Function

In decentralized environments, integrations behave like products, not one-time implementations. Sponsors should maintain real-time dashboards, error budgets, integration health metrics, and on-call rotations. These practices ensure early detection of data drift, device outages, or ingestion stalls before they affect participant safety or regulatory timelines.1,4
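An error budget is the number of failures an integration may incur before it breaches its objective. The sketch below shows the arithmetic with exact fractions to avoid floating-point rounding; the function name, the return shape, and the example target are hypothetical.

```python
from fractions import Fraction
from typing import Dict, Union


def error_budget(
    total_requests: int,
    failed_requests: int,
    slo_target: Fraction,
) -> Dict[str, Union[float, bool]]:
    """Compare observed failures with the failures the SLO allows.

    `slo_target` is the required success rate, e.g. Fraction(995, 1000)
    for 99.5%.
    """
    allowed = (1 - slo_target) * total_requests
    remaining = allowed - failed_requests
    return {
        "allowed_failures": float(allowed),
        "remaining": float(remaining),
        "exhausted": remaining < 0,
    }
```

With a 99.5% target over 10,000 ingestion requests, the budget is 50 failures; a team that has already seen 40 has little room left for risky changes, which is the signal an on-call rotation would act on.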

7.4 Quarterly Mock Inspections

Given the fragmented nature of decentralized ecosystems, mock inspections are essential. Quarterly simulations ensure audit trails are complete, lineage can be reconstructed, vendor documentation is in order, and inspection responses are consistent. Audit findings in recent years show that sponsors who conduct routine mock inspections face fewer surprises during regulatory reviews.4,6

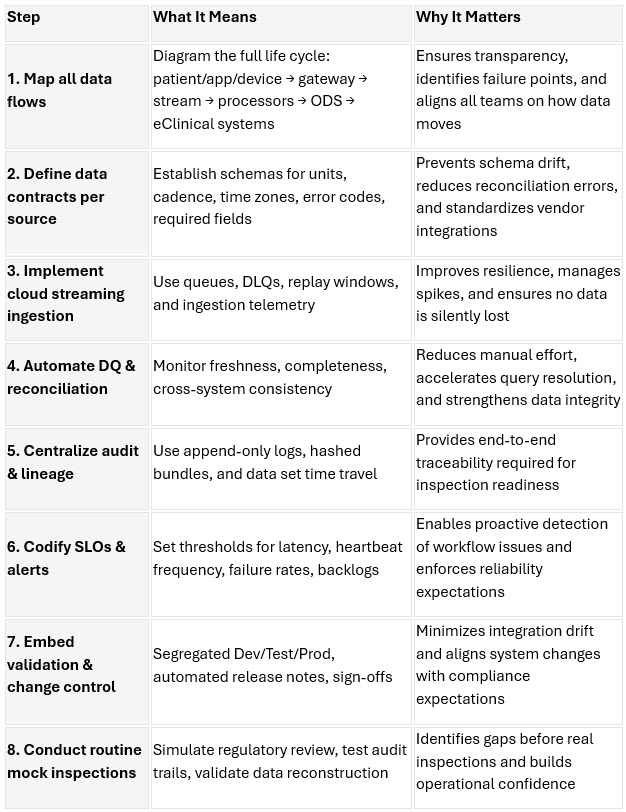

A Practical Blueprint For DCT Operational Excellence

To help sponsors operationalize hybrid and decentralized trial workflows consistently, the following blueprint summarizes the essential steps, their intent, and their value. This table is designed to be easily used as an internal guide or incorporated directly into study start-up materials.

Together, these blueprint steps provide a structured operational foundation for hybrid and decentralized trials. By combining cloud-native ingestion, proactive monitoring, automated reconciliation, and embedded governance, sponsors can reduce operational friction and maintain inspection readiness even as trial complexity and data volume increase. This blueprint is adaptable across therapeutic areas, outsourcing models, and regional footprints, making it a practical tool for any organization advancing DCT maturity.

Conclusion

Hybrid and decentralized trials require far more than patient-facing apps and disconnected digital touchpoints. Their success depends on a cloud-native operating model that supports reliable ingestion, normalization, quality management, auditability, and real-time oversight across globally distributed participants and vendors. Evidence from 2024–2026 literature and real-world implementations consistently shows that when sponsors establish strong data foundations, they dramatically reduce manual reconciliation, surface risks earlier, and reach inspection readiness by default.

With thoughtful workload placement, event-driven integration patterns, and governance by design built into every layer of the architecture, sponsors can scale decentralized and hybrid trial models globally without compromising data integrity, participant safety, or regulatory confidence.

References:

1. JAMA Network Open (April 2024): Remote Monitoring and Data Collection for Decentralized Clinical Trials. Oncology sponsor adoption patterns and enabling technologies. https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2817479

2. PLOS Digital Health (Jan 2026): Decentralized clinical trials: a comprehensive analysis of trends, technologies, and global challenges. Analysis of 1,370 U.S.-registered DCTs. https://journals.plos.org/digitalhealth/article?id=10.1371/journal.pdig.0001191

3. Real-world DCT case roundup (2025): Real World Examples of Decentralized Clinical Trials Using Remote Monitoring. Multi-therapy DCTs using wearables, telemedicine, cloud dashboards. https://www.clinicalstudies.in/real-world-examples-of-decentralized-clinical-trials-using-remote-monitoring/

4. Applied Clinical Trials / UW CTI (2023–2024): Decentralized Clinical Trials: Being Audit Ready and Avoiding Pitfalls. Common DCT audit findings, TMF/documentation gaps, vendor oversight expectations. https://www.appliedclinicaltrialsonline.com/view/decentralized-clinical-trials-being-audit-ready-and-avoiding-pitfalls, https://uwclinicaltrials.org/2023/11/20/decentralized-clinical-trials-being-audit-ready-and-avoiding-pitfalls/

5. Castor (2025): Decentralized Clinical Trial Platforms in 2025: A Practical Guide for Clinical Operations. Integration, eConsent/eCOA/EDC orchestration, medical records retrieval, API callbacks. https://www.castoredc.com/insight-briefs/decentralized-clinical-trial-platforms-in-2025-a-practical-guide-for-clinical-operations/

6. FDA/MHRA/Health Canada Workshop (Feb 2024): Clinical Trials with Decentralized Elements and GCP Inspections. Inspection expectations and decentralized element definitions. https://www.fda.gov/media/177584/download

About The Author:

Kannan Ramachandran is a category specialist at Beroe Inc., a global procurement intelligence firm that partners with over 10,000 companies worldwide, including a majority of the Fortune 500. With deep expertise in cloud computing and data center infrastructure, Kannan advises enterprises on strategic sourcing, cost optimization, and digital transformation. His work is rooted in data-driven research and market intelligence, helping organizations make informed decisions in a rapidly evolving tech landscape.
